If you were upgraded to Managed Fusion 5.9.12 and rolled back to Managed Fusion 5.9.9, you will be upgraded to the re-released Managed Fusion 5.9.12.

What’s new

Improved relevance in large documents with chunking

Fusion 5.9.12 introduces document chunking, a major advancement for search and generative AI quality and performance. Chunking breaks large documents into smaller, meaningful segments—called chunks—that are stored and indexed using Solr’s block join capabilities. Each chunk captures either lexical content (for keyword-based search) or semantic vectors (for neural search), enabling Fusion to retrieve the most relevant part of a document, rather than treating the document as a single block. This improves:- Search relevance: Users get results that point to the most relevant sections within large documents, not just documents that match overall.

- Neural search precision: Vector chunks improve hybrid scoring by aligning semantic relevance with specific lexical content.

- Scalability and maintainability: Updates or deletions are applied at the chunk level, ensuring consistency and avoiding stale or orphaned content.

- Faceted search and UX: Results can be grouped and ranked more accurately, especially in use cases where dense documents contain multiple topics.

- A new LWAI Chunker index pipeline stage uses one of the available chunking strategies (chunkers) for the specified LW AI model to provide optimized storage and retrieval. The chunkers asynchronously split the provided text in various ways such as by sentence, new line, semantics, and regular expression (regex) syntax.

- The Chunking Neural Hybrid Query pipeline stage now detects chunked documents and retrieves the most relevant lexical and vector segments for hybrid search.

- Updates and deletions now ensure consistent chunk synchronization to prevent orphaned data.

- This feature includes a new Lucidworks AI Async Chunking API.

Contact your Lucidworks account manager to confirm that your license includes this feature.

Model hosting with Ray

Managed Fusion 5.9.12 introduces support for model hosting with Ray, replacing the previous Seldon-based approach. Ray offers a more scalable and efficient architecture for serving machine learning models, with native support for distributed inference, autoscaling, and streamlined deployment. This transition simplifies Managed Fusion’s AI infrastructure, enhances performance, and aligns with modern MLOps practices to make deploying and managing models faster, more reliable, and easier to monitor. For more information, see Develop and deploy a machine learning model with Ray.- The Seldon vectorize stages have been renamed to Ray/Seldon Vectorize Field and Ray/Seldon Vectorize Query.

- There are two new jobs for deploying models with Ray:

Develop and deploy a machine learning model with Ray

Develop and deploy a machine learning model with Ray

This tutorial walks you through deploying your own model to Fusion with Ray.A real instance of this class with the In the preceding code, logging has been added for debugging purposes.The preceding code example contains the following functions:In the preceding example, the Python file is named Any recent ray[serve] version should work, but the tested value and known supported version is 2.42.1.

In general, if an item was used in an Using the example model, the terminal commands would be as follows:This repository is public and you can visit it here: e5-small-v2-ray

This feature is only available in Fusion 5.9.x for versions 5.9.12 and later.

Prerequisites

- A Fusion instance with an app and indexed data.

- An understanding of Python and the ability to write Python code.

- Docker installed locally, plus a private or public Docker repository.

- Ray installed locally:

pip install ray[serve]using the version of ray[serve] found in the release notes for your version of Managed Fusion. - Code editor; you can use any editor, but Visual Studio Code is used in this example.

- Model: intfloat/e5-small-v2

- Docker image: e5-small-v2-ray

Tips

- Always test your Python code locally before uploading to Docker and then Fusion. This simplifies troubleshooting significantly.

- Once you’ve created your Docker you can also test locally by doing

docker runwith a specified port, like 9000, which you can thencurlto confirm functionality in Fusion. See the testing example below. - If you previously deployed a model with Seldon, you can deploy the same model with Ray after making a few changes to your Docker image as explained in this topic. To avoid conflicts, deploy the model with a different name. When you have verified that the Ray model is working after deployment with Ray, you can delete the Seldon model using the Delete Seldon Core Model Deployment job.

- If you run into an issue with the model not deploying and you’re using the ‘real’ example, there is a very good chance you haven’t allocated enough memory or CPU in your job spec or in the Ray-Argo config.

It’s easy to increase the resources. To edit the ConfigMap, run

kubectl edit configmap argo-deploy-ray-model-workflow -n <namespace>and then find theray-headcontainer in the artisanal escaped YAML and change the memory limit. Exercise caution when editing because it can break the YAML. Just delete and replace a single character at a time without changing any formatting.- For additional guidance, see the testing locally e5-model example.

Intro to Machine Learning in Fusion

The course for Intro to Machine Learning in Fusion focuses on using machine learning to infer the goals of customers and users in order to deliver a more sophisticated search experience.

Local testing example

- Docker command:

- Curl to hit Docker:

- Curl model in Fusion:

- See all your deployed models:

- Check the Ray UI to see Replica State, Resources, and Logs.

If you are getting an internal model error, the best way to see what is going on is to query via port-forwarding the model.

TheMODEL_DEPLOYMENTin the command below can be found withkubectl get svc -n NAMESPACE. It will have the same name as set in the model name in the Create Ray Model Deployment job.Once port-forwarding is successful, you can use the below cURL command to see the issue. At that point your worker logs should show helpful error messages.

Download the model

This tutorial uses thee5-small-v2 model from Hugging Face, but any pre-trained model from https://huggingface.co will work with this tutorial.If you want to use your own model instead, you can do so, but your model must have been trained and then saved though a function similar to the PyTorch’s torch.save(model, PATH) function.

See Saving and Loading Models in the PyTorch documentation.Format a Python class

The next step is to format a Python class which will be invoked by Fusion to get the results from your model. The skeleton below represents the format that you should follow. See also Getting Started in the Ray Serve documentation.e5-small-v2 model is as follows:This code pulls from Hugging Face. To have the model load in the image without pulling from Hugging Face or other external sources, download the model weights into a folder name and change the model name to the folder name preceded by

./.__call__: This function is non-negotiable.init: Theinitfunction is where models, tokenizers, vectorizers, and the like should be set to self for invoking.

It is recommended that you include your model’s trained parameters directly into the Docker container rather than reaching out to external storage insideinit.encode: Theencodefunction is where the field or query that is passed to the model from Fusion is processed.

Alternatively, you can process it all in the__call__function, but it is cleaner not to.

Theencodefunction can handle any text processing needed for the model to accept input invoked in itsmodel.predict()or equivalent function which gets the expected model result.

Use the exact name of the class when naming this file.

deployment.py and the class name is Deployment().Create a Dockerfile

The next step is to create a Dockerfile. The Dockerfile should follow this general outline; read the comments for additional details:Create a requirements file

Therequirements.txt file is a list of installs for the Dockerfile to run to ensure the Docker container has the right resources to run the model.

For the e5-small-v2 model, the requirements are as follows:import statement in your Python file, it should be included in the requirements file.To populate the requirements, use the following command in the terminal, inside the directory that contains your code:Build and push the Docker image

After creating theMODEL_NAME.py, Dockerfile, and requirements.txt files, you need to run a few Docker commands.

Run the following commands in order:Deploy the model in Fusion

Now you can go to Fusion to deploy your model.When deploying your Ray model, you have two options for handling traffic:- Use a single deployment. Deploy one model job that handles both indexing and query traffic. This is simpler to manage and requires only one deployment.

- Use separate deployments for indexing and querying. Deploy two separate model jobs: one dedicated to indexing and another for query traffic. This approach eliminates the risk of indexing workloads impacting query response times, providing better performance isolation and independent scaling control.

EXAMPLE_MODEL_INDEX and EXAMPLE_MODEL_QUERY). Use the index-specific model in your index pipeline stages and the query-specific model in your query pipeline stages. To keep both deployments in sync, ensure both jobs use the exact same model name, Ray deployment import path, Docker repository, and image name.- In Fusion, navigate to Collections > Jobs.

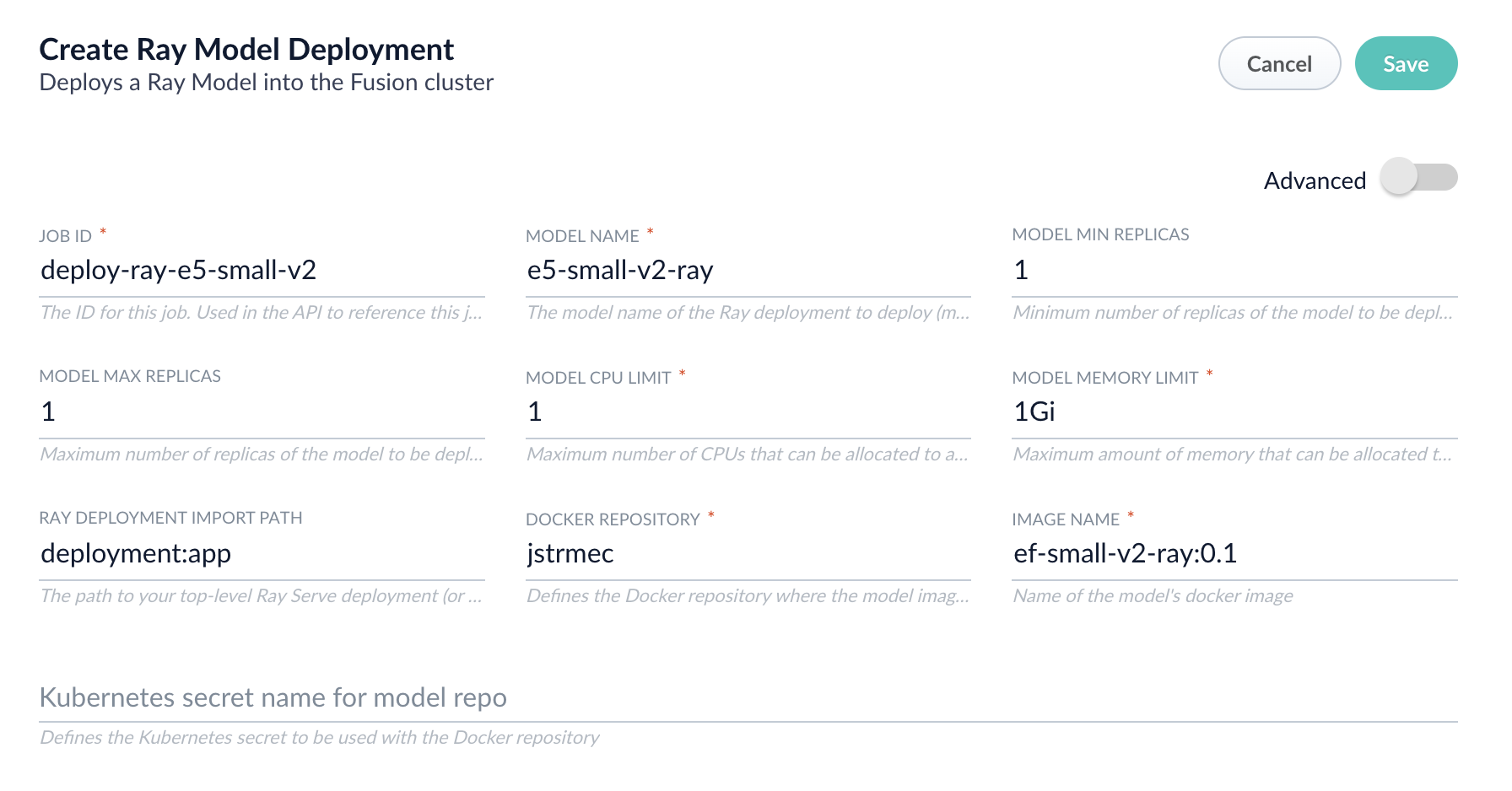

- Add a job by clicking the Add+ Button and selecting Create Ray Model Deployment.

-

Fill in each of the text fields:

Parameter Description Job ID A string used by the Fusion API to reference the job after its creation. Model name A name for the deployed model. This is used to generate the deployment name in Ray. It is also the name that you reference as a model-idwhen making predictions with the ML Service.Model min replicas The minimum number of load-balanced replicas of the model to deploy. Model max replicas The maximum number of load-balanced replicas of the model to deploy. Specify multiple replicas for a higher-volume intake. Model CPU limit The number of CPUs to allocate to a single model replica. Model memory limit The maximum amount of memory to allocate to a single model replica. Ray Deployment Import Path The path to your top-level Ray Serve deployment (or the same path passed to serve run). For example,deployment:appDocker Repository The public or private repository where the Docker image is located. If you’re using Docker Hub, fill in the Docker Hub username here. Image name The name of the image. For example, e5-small-v2-ray:0.1.Kubernetes secret If you’re using a private repository, supply the name of the Kubernetes secret used for access. -

Click Advanced to view and configure advanced details:

Parameter Description Additional parameters. This section lets you enter parameter name:parametervalue options to be injected into the training JSON map at runtime. The values are inserted as they are entered, so you must surround string values with". This is the sparkConfig field in the configuration file.Write Options. This section lets you enter parameter name:parametervalue options to use when writing output to Solr or other sources. This is the writeOptions field in the configuration file.Read Options. This section lets you enter parameter name:parametervalue options to use when reading input from Solr or other sources. This is the readOptions field in the configuration file. -

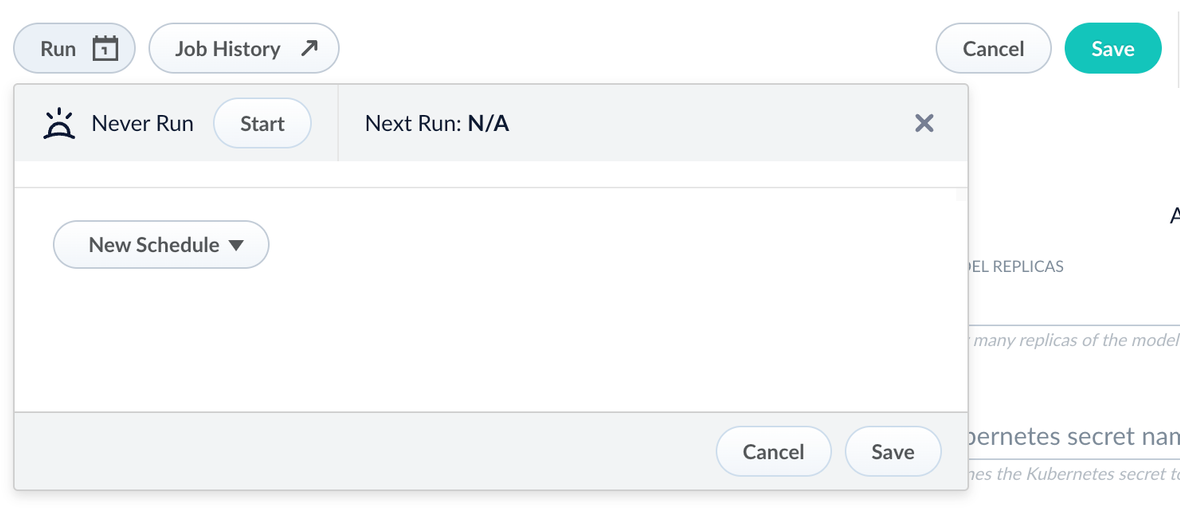

Click Save, then Run and Start.

Configure the Fusion pipelines

Your real-world pipeline configuration depends on your use case and model, but for our example we will configure the index pipeline and then the query pipeline.Configure the index pipeline- Create a new index pipeline or load an existing one for editing.

- Click Add a Stage and then Machine Learning.

- In the new stage, fill in these fields:

- The model ID

- The model input

- The model output

- Save the stage in the pipeline and index your data with it.

- Create a new query pipeline or load an existing one for editing.

- Click Add a Stage and then Machine Learning

- In the new stage, fill in these fields:

- The model ID

- The model input

- The model output

- Save the stage and then run a query by typing a search term.

- To verify the Ray results are correct, use the Compare+ button to see another pipeline without the model implementation and compare the number of results.

AI and machine learning features

Managed Fusion’s machine learning services now run on Python 3.10 and Java 11, bringing improved performance, security, and compatibility with the latest libraries. These upgrades enhance model execution speed, memory efficiency, and long-term support, ensuring Managed Fusion’s machine learning capabilities remain optimized for your evolving AI workloads. No configuration changes are required to take advantage of these improvements.Improved prefiltering support for Neural Hybrid Search (NHS)

Managed Fusion 5.9.12 introduces a more robust prefiltering strategy for Neural Hybrid Search, including support for chunked document queries. The new approach ensures that security filters and other constraints are applied consistently and efficiently to KNN queries—improving precision, maintaining performance, and avoiding previous compatibility issues with Solr query syntax.Bug fixes

-

Fixed an issue where ConfigSync removed job schedules during upgrade.

In some cases, upgrading to Managed Fusion 5.9.11 could result in ConfigSync removing job schedules from the cluster without consistently reapplying them, leading to missing or disabled schedules post-upgrade.

Managed Fusion 5.9.12 resolves this issue, ensuring job schedules remain intact across upgrades and ConfigSync behaves predictably in all environments. -

Fixed incorrect image repository for Solr in Helm charts.

In Managed Fusion 5.9.11, the Helm chart specified an internal Lucidworks Artifactory repository for the Solr image.

This has been corrected in 5.9.12 so the Solr image repository is either empty or points tolucidworks/fusion-solr, aligning with other components and simplifying deployment for external environments. -

Added support for configuring the Spark version used by Fusion.

Managed Fusion 5.9.12 now lets your choose whether to use Spark 3.4.1 (introduced in Fusion 5.9.10) or the earlier Spark 3.2.2 version used in Fusion 5.9.9.

This flexibility helps maintain compatibility with legacy Python (3.7.3) and Scala environments, especially for apps that depend on specific Spark runtime behaviors.

When Spark 3.4.1 is enabled, custom Python jobs require Python 3.10.

Contact Lucidworks to request Spark 3.2.2 for your Managed Fusion deployment. -

Fixed incorrect

started-byvalues for datasource jobs in the job history.

In previous versions, datasource jobs started from the Managed Fusion UI were incorrectly shown as started bydefault-subjectinstead of the actual user.

Managed Fusion now correctly records and displays the initiating user in the job history, restoring accurate audit information for datasource operations. -

Fixed a schema loading issue that prevented older apps from working with the Schema API.

Managed Fusion now correctly handles both

managed-schemaandmanaged-schema.xmlfiles when reading Solr config sets, ensuring backward compatibility with apps created before the move to template-based config sets.

This prevents Schema API failures caused by unhandled exceptions during schema file lookup. -

Scheduled jobs now correctly trigger dependent jobs.

In Managed Fusion 5.9.12, we fixed an issue that prevented scheduled jobs from triggering other jobs based on their success or failure status.

This includes jobs configured to run “on_success_or_failure” or using the “Start + Interval” option.

With this fix, dependent jobs now execute as expected, restoring reliable job chaining and scheduling workflows. -

Fixed an issue that prevented updates to existing scheduled job triggers in the Schedulers view.

This bug was caused by inconsistencies in how the API returned UTC timestamps, particularly for times after 12:00 UTC. The Admin UI now correctly detects changes and allows updates to trigger times without requiring the entry to be deleted and recreated. -

Improved reliability of scheduled jobs in the

job-configservice.

This release resolves several issues that could interfere with job scheduling and history visibility in Managed Fusion environments:-

Stronger recovery from infrastructure interruptions: Ensures the scheduler recovers if all

job-configpods briefly lose connection to ZooKeeper. - Correct permission handling: Fixes cases where jobs could not be scheduled due to mismatches between user permissions and service account behavior.

- Restored visibility of system job history: Fixes an issue where system jobs such as delete-old-system-logs and delete-old-job-history were missing from the UI despite running normally in the background.

- Reliable schedule creation in all app states: Fixes an issue where adding a new schedule from the Run dialog appeared to succeed but did not persist the configuration in some apps.

-

Stronger recovery from infrastructure interruptions: Ensures the scheduler recovers if all

-

Fixed a simulation failure in the Index Workbench when configuring new datasources.

Managed Fusion 5.9.12 resolves an issue where Index Workbench failed to simulate results after configuring a new datasource, displaying the error “Failed to simulate results from a working pipeline.” This fix restores full functionality to the Index Workbench, allowing you to preview and configure indexing workflows in one place without switching between multiple views. -

Fixed a bug that caused aborted jobs to appear twice in the job history.

Previously, when you manually aborted a job, it was recorded twice in the job history.

This duplication has been resolved, and each aborted job now appears only once in the history log. -

Fixed an issue that prevented segment-based rule filtering from working correctly in Commerce Studio. Managed Fusion now honors the

lw.rules.target_segmentparameter, ensuring only matching rules are triggered and improving rule targeting and safety. -

This release eliminates extra warning messages in the API Gateway related to undetermined service ports. Previously, the gateway logged repeated warnings about missing

primary-port-namelabels, even though this did not impact functionality. This fix reduces unnecessary log noise and improves the clarity of your logs. -

Fixed in the Managed Fusion 5.9.15 release, this patch was also applied to Managed Fusion 5.9.12:

Added a custom module that serializes Nashorn

undefinedvalues as JSONnull, preventing serialization errors when such values appear in Java objects. When using the OpenJDK version of Nashorn, an exception was thrown when the context contained keys with undefined JavaScript values. This exception occurred because the class for the undefined values was not visible outside of the OpenJDK Nashorn package. A custom object mapper is added for the class so serialization issues are now resolved when handlingundefinedin JavaScript pipeline to run reliably under Java 17.

Known issues

-

UI may incorrectly report

job-configas down

In Managed Fusion 5.9.12 through 5.9.13, thejob-configservice may be flagged as “down” in the UI even when running normally.

This display issue is fixed in Managed Fusion 5.9.14.For thejob-configservice, the Next Run value is updated only after the current job completes. Because the same job cannot run multiple times simultaneously, if another run is scheduled before the current job completes, that run is not executed. -

Jobs and V2 datasources may fail when Managed Fusion collections are remapped to different Solr collections.

In Managed Fusion versions 5.9.12 through 5.9.13, strict validation in thejob-configservice causes “Collection not found” errors when jobs or V2 datasources target Managed Fusion collections that point to differently named Solr collections.

This issue is fixed in Managed Fusion 5.9.14.

As a workaround, use V1 datasources or avoid using REST call jobs on remapped collections. -

Saving large pipelines during high traffic may trigger service instability.

In some environments, saving large query pipelines while handling high traffic loads can cause the Query service to crash with OOM errors due to thread contention.

Managed Fusion 5.9.14 resolves this issue.

If you’re impacted and not yet on this version, contact Lucidworks Support for mitigation options. -

Jobs for Web V2 connectors may fail to start after an earlier failure.

If a Web V2 connector job is interrupted—such as by scaling down the connector pod—the system may enter a corrupted state.

Even after clearing and recreating the datasource, new jobs may fail with the errorThe state should never be null.

This issue is fixed in Fusion 5.9.13. -

The

fusion-spark-3.2.2image in Fusion 5.9.12 may fail to refresh Kubernetes tokens correctly.

In Managed Fusion 5.9.12 environments, Spark jobs that rely on token-based authentication can fail due to a Fabric8 client bug in the 3.2.2 Spark image.

This may impact the stability or execution of long-running jobs. This issue is fixed in Fusion 5.9.13. -

The

job-configservice may incorrectly report aDOWNstatus via/actuator/healtheven when running normally.

When TLS is enabled and ZooKeeper is unavailable for an extended period, thejob-configservice may resume normal operation but continue to reportDOWNon the actuator health endpoint, despite readiness and liveness probes reportingUP.

This issue is fixed in Fusion 5.9.13. -

Web connector may fail to index due to corrupted job state

Managed Fusion running 5.9.12 may fail to index with the Webv2 connector (v2.0.1) due to a corrupted job state in theconnectors-backendservice.

Affected jobs log the errorThe state should never be null, and common remediation steps like deleting the datasource or reinstalling the connector plugin may not resolve the issue.

The issue is fixed in Managed Fusion 5.9.13. -

Saving new datasource schedules may fail silently.

In some Managed Fusion 5.9.12 environments, clicking Save when adding a schedule from the datasource “Run” dialog does not persist the schedule or show an error message, particularly in apps created before the upgrade.

As a workaround, use a new app or manually verify that the job configuration was saved.

This issue is fixed in Managed Fusion 5.9.13.

Removals

For full details on removals, see Deprecations and Removals.- Bitnami removal

By August 28, 2025, Fusion’s Helm chart will reference internally built open-source images instead of Bitnami images due to changes in how they host images. - The Tika Server Parser is removed in this release.

Use the Tika Asynchronous Parser instead. Asynchronous Tika parsing performs parsing in the background. This allows Managed Fusion to continue indexing documents while the parser is processing others, resulting in improved indexing performance for large numbers of documents. - MLeap is removed from the

ml-modelservice. MLeap was deprecated in Managed Fusion 5.2.0 and was no longer used by Managed Fusion.

Platform Support and Component Versions

Kubernetes platform support

Lucidworks has tested and validated support for the following Kubernetes platform and versions:- Google Kubernetes Engine (GKE): 1.29, 1.30, 1.31

Component versions

The following table details the versions of key components that may be critical to deployments and upgrades.| Component | Version |

|---|---|

| Solr | fusion-solr 5.9.12 (based on Solr 9.6.1) |

| ZooKeeper | 3.9.1 |

| Spark | 3.4.1 |

| Ingress Controllers | Nginx, Ambassador (Envoy), GKE Ingress Controller |

| Ray | ray[serve] 2.42.1 |