Urgent action required by November 26, 2025

A patch is required by November 26, 2025 for all self-hosted Fusion deployments running on Amazon Elastic Kubernetes Service (EKS). Certain Java versions used by Fusion components reach end of life on this date. Failure to apply the patch will result in compatibility issues.

Instructions for applying the patch

The following Fusion services require the cgroupv2 patch:

| Service | Affected Fusion versions | Patch tag |
|---|---|---|
| insights | 5.9.4 to 5.9.15 | lucidworks/insights:5.9-cgroupv2-patch |
| spark-solr-etl | 5.9.4 to 5.9.11 | lucidworks/spark-solr-etl:5.9-cgroupv2-patch |
| keytool-utils | 5.9.4 to 5.9.10 | lucidworks/keytool-utils:5.9-cgroupv2-patch |

Follow these steps to apply the patched images:

- Open your Fusion Helm values file. For Fusion Cloud Native deployments, use the values file for your current deployment. For Helm deployments, use the values file you used to create the deployment.

- For each service in the table above that applies to your Fusion version, add or update the image configuration:

  - Fusion 5.9.4 to 5.9.10: insights, spark-solr-etl, and keytool-utils

  - Fusion 5.9.11: insights and spark-solr-etl

  - Fusion 5.9.12 to 5.9.15: insights

- Save the values file.

- For Fusion Cloud Native deployments, run the `upgrade_fusion.sh` script you used for your current deployment. For Helm deployments, run the Helm upgrade command for your release with the updated values file.

- Wait for the affected service pods to restart and verify they are using the patched images.
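As a quick check before editing the values file, the lookup in the patch table can be sketched in Python. This is only an illustration: the `PATCHES` dictionary copies the service names, version ranges, and image tags from the table, while the function names `parse_version` and `patched_images` are hypothetical helpers and not part of Fusion.

```python
from typing import Dict, Tuple

Version = Tuple[int, ...]

# Copied from the patch table: service -> (first affected, last affected, patched image).
PATCHES: Dict[str, Tuple[Version, Version, str]] = {
    "insights": ((5, 9, 4), (5, 9, 15), "lucidworks/insights:5.9-cgroupv2-patch"),
    "spark-solr-etl": ((5, 9, 4), (5, 9, 11), "lucidworks/spark-solr-etl:5.9-cgroupv2-patch"),
    "keytool-utils": ((5, 9, 4), (5, 9, 10), "lucidworks/keytool-utils:5.9-cgroupv2-patch"),
}


def parse_version(text: str) -> Version:
    """Turn a dotted version such as '5.9.11' into a tuple of integers."""
    return tuple(int(part) for part in text.split("."))


def patched_images(fusion_version: str) -> Dict[str, str]:
    """Return the services that need a patched image for this Fusion version."""
    version = parse_version(fusion_version)
    return {
        service: image
        for service, (first, last, image) in PATCHES.items()
        if first <= version <= last
    }


if __name__ == "__main__":
    for service, image in patched_images("5.9.11").items():
        print(f"{service}: {image}")
```

Comparing versions as integer tuples avoids the string-comparison trap where "5.9.10" would sort before "5.9.4".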

Platform Support and Component Versions

Kubernetes platform support

Lucidworks has tested and validated support for the following Kubernetes platforms and versions:

- Google Kubernetes Engine (GKE): 1.28, 1.29, 1.30

- Microsoft Azure Kubernetes Service (AKS): 1.28, 1.29, 1.30

- Amazon Elastic Kubernetes Service (EKS): 1.28, 1.29, 1.30

Component versions

The following table details the versions of key components that may be critical to deployments and upgrades.

| Component | Version |
|---|---|
| Solr | fusion-solr 5.9.6 (based on Solr 9.6.1) |
| ZooKeeper | 3.9.1 |
| Spark | 3.2.2 |
| Ingress Controllers | Nginx, Ambassador (Envoy), GKE Ingress Controller. Istio is not supported. |

New Features

Support for Open Nashorn

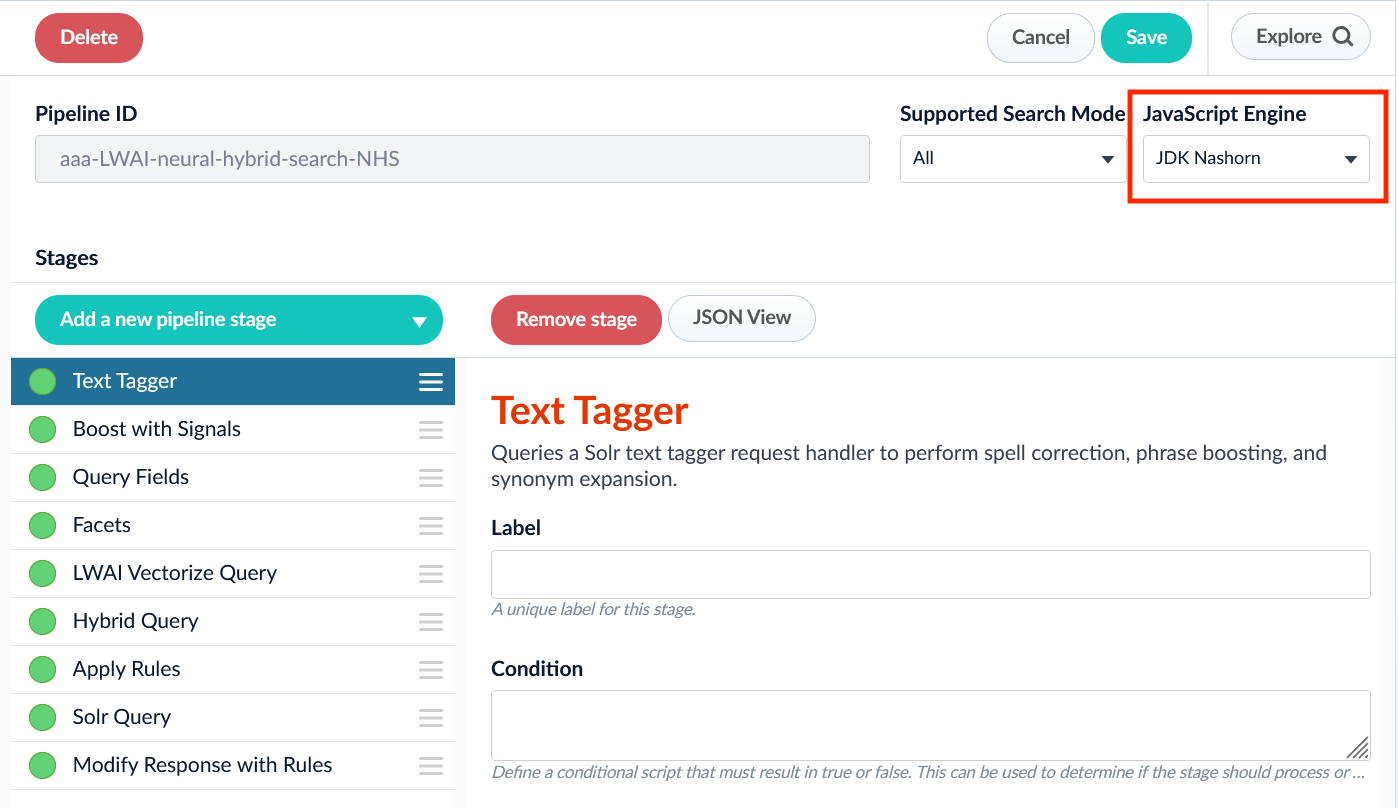

Now your pipeline definitions can include your choice of JavaScript engine, either “Nashorn” or “OpenJDK Nashorn”. You can select the JavaScript engine in the pipeline views. Your JavaScript pipeline stages are interpreted by the selected engine.

Nashorn is the default option, but it is being deprecated and will eventually be removed. Use OpenJDK Nashorn when possible.

Improvements

- Fusion’s Remote V2 Connectors now support the Amazon Web Services (AWS) Application Load Balancer (ALB) for ingress.

See Configure Remote V2 Connectors for configuration details.

Configure Remote V2 Connectors

If you need to index data from behind a firewall, you can configure a V2 connector to run remotely on-premises using TLS-enabled gRPC.

The gRPC connector backend is not supported in Fusion environments deployed on AWS.

Prerequisites

Before you can set up an on-prem V2 connector, you must configure the egress from your network to allow HTTP/2 communication into the Fusion cloud. You can use a forward proxy server to act as an intermediary between the connector and Fusion.

The following is required to run V2 connectors remotely:

- The plugin zip file and the connector-plugin-standalone JAR.

- A configured connector backend gRPC endpoint.

- Username and password of a user with a `remote-connectors` or `admin` role.

- If the host where the remote connector is running is not configured to trust the server’s TLS certificate, you must configure the file path of the trust certificate collection.

If your version of Fusion doesn’t have the `remote-connectors` role by default, you can create one. No API or UI permissions are required for the role.

Connector compatibility

Only V2 connectors are able to run remotely on-premises. You also need the remote connector client JAR file that matches your Fusion version. You can download the latest files at V2 Connectors Downloads.

Whenever you upgrade Fusion, you must also update your remote connectors to match the new version of Fusion.

System requirements

The following is required for the on-prem host of the remote connector:

- (Fusion 5.9.0-5.9.10) JVM version 11

- (Fusion 5.9.11) JVM version 17

- Minimum of 2 CPUs

- 4 GB memory

Enable backend ingress

In your `values.yaml` file, configure the backend ingress section as needed:

- Set `enabled` to `true` to enable the backend ingress.

- Set `pathtype` to `Prefix` or `Exact`.

- Set `path` to the path where the backend will be available.

- Set `host` to the host where the backend will be available.

- In Fusion 5.9.6 only, you can set `ingressClassName` to one of the following:

  - `nginx` for Nginx Ingress Controller

  - `alb` for AWS Application Load Balancer (ALB)

- Configure TLS and certificates according to your CA’s procedures and policies.

  TLS must be enabled in order to use AWS ALB for ingress.
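The constraints in the list above can be expressed as a small pre-deployment check. The following Python sketch is illustrative only. It assumes the ingress settings have already been loaded into a flat dictionary. The real nesting in `values.yaml` is not shown here, and `tls_enabled` is a hypothetical key standing in for however TLS is switched on in your chart.

```python
from typing import Any, Dict, List

ALLOWED_PATH_TYPES = {"Prefix", "Exact"}
ALLOWED_INGRESS_CLASSES = {"nginx", "alb"}


def check_backend_ingress(cfg: Dict[str, Any]) -> List[str]:
    """Return a list of problems found in the backend ingress settings."""
    problems: List[str] = []
    if cfg.get("enabled") is not True:
        problems.append("enabled must be true to enable the backend ingress")
    if cfg.get("pathtype") not in ALLOWED_PATH_TYPES:
        problems.append("pathtype must be Prefix or Exact")
    if not cfg.get("path"):
        problems.append("path must be set")
    if not cfg.get("host"):
        problems.append("host must be set")
    # ingressClassName is optional, so only validate it when present.
    ingress_class = cfg.get("ingressClassName")
    if ingress_class is not None and ingress_class not in ALLOWED_INGRESS_CLASSES:
        problems.append("ingressClassName must be nginx or alb")
    # 'tls_enabled' is a hypothetical key used only for this sketch.
    if ingress_class == "alb" and not cfg.get("tls_enabled"):
        problems.append("TLS must be enabled to use AWS ALB for ingress")
    return problems


if __name__ == "__main__":
    example = {
        "enabled": True,
        "pathtype": "Prefix",
        "path": "/",
        "host": "connectors.example.com",  # placeholder host
        "ingressClassName": "alb",
        "tls_enabled": False,
    }
    for problem in check_backend_ingress(example):
        print(problem)
```

Returning every problem at once, instead of raising on the first one, makes it easier to fix a values file in a single pass.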

Connector configuration example

Minimal example

Logback XML configuration file example

Run the remote connector

The `logging.config` property is optional. If not set, logging messages are sent to the console.

Test communication

You can run the connector in communication testing mode. This mode tests the communication with the backend without running the plugin, reports the result, and exits.

Encryption

In a deployment, communication to the connector’s backend server is encrypted using TLS. You should only run this configuration without TLS in a testing scenario. To disable TLS, set `plain-text` to `true`.

Egress and proxy server configuration

One way to allow outbound communication from behind a firewall is a proxy server. You can configure a proxy server to allow certain communication traffic while blocking unauthorized communication. If you use a proxy server at the site where the connector is running, you must configure the following properties:

- Host. The host where the proxy server is running.

- Port. The port the proxy server is listening to for communication requests.

- Credentials. Optional proxy server user and password.

Password encryption

If you use a login name and password in your configuration, run the following utility to encrypt the password:

- Enter a user name and password in the connector configuration YAML.

- Run the standalone JAR with this property:

- Retrieve the encrypted passwords from the log that is created.

- Replace the clear password in the configuration YAML with the encrypted password.

Connector restart (5.7 and earlier)

The connector shuts down automatically whenever the connection to the server is disrupted, to prevent it from getting into a bad state. Communication disruption can happen, for example, when the server running in the `connectors-backend` pod shuts down and is replaced by a new pod. Once the connector shuts down, connector configuration and job execution are disabled until it is restarted, so restart the connector as soon as possible.

You can use Linux scripts and utilities, such as Monit, to restart the connector automatically.

Recoverable bridge (5.8 and later)

If communication to the remote connector is disrupted, the connector tries to recover communication and gRPC calls. By default, six attempts are made to recover each gRPC call. The number of attempts can be configured with the `max-grpc-retries` bridge parameter.

Job expiration duration (5.9.5 only)

The timeout value for unresponsive backend jobs can be configured with the `job-expiration-duration-seconds` parameter. The default value is 120 seconds.

Use the remote connector

Once the connector is running, it is available in the Datasources dropdown. If the standalone connector terminates, it disappears from the list of available connectors. Once it is re-run, it is available again and configured connector instances are not lost.

Enable asynchronous parsing (5.9 and later)

To separate document crawling from document parsing, enable Tika Asynchronous Parsing on remote V2 connectors.

Removals

Bitnami removal

Fusion 5.9.6 will be re-released with the same functionality but updated image references. In the meantime, Lucidworks will self-host the required images while we work to replace Bitnami images with internally built open-source alternatives. If you are a self-hosted Fusion customer, you must upgrade before August 28 to ensure continued access to container images and prevent deployment issues. You can reinstall your current version of Fusion or upgrade to Fusion 5.9.14, which includes the updated Helm chart and prepares your environment for long-term compatibility. See Prevent image pull failures due to Bitnami deprecation in Fusion 5.9.5 to 5.9.13 for more information on how to prevent image pull failures.

Bug fixes

- Fixed an issue that caused the API Gateway service to return a `401` error code for `400` errors.

- Fixed an issue that passed redacted configuration values to Fusion, resulting in invalid configurations.

- Fixed an issue that caused intermittent errors in Machine Learning stages.

- Fixed an issue where the `sourceType` query parameter was not being conveyed to Lucidworks AI.

- Fixed an issue that prevented the correct display of some UI panels for non-admin users.

- Fixed an issue where the names of some of the configuration checkboxes for the Web connector were truncated.

- Fixed an issue with the Web 1.4.0 connector that, in rare cases, prevented the client secret from being saved.

- Fixed an issue that caused a `Class cannot be created (missing no-arg constructor)` error when a response document contained an undefined value.